OpenAI's GPT-5.5 update is progressing smoothly, especially compared to the more problematic GPT-5.0 version that was released last August.

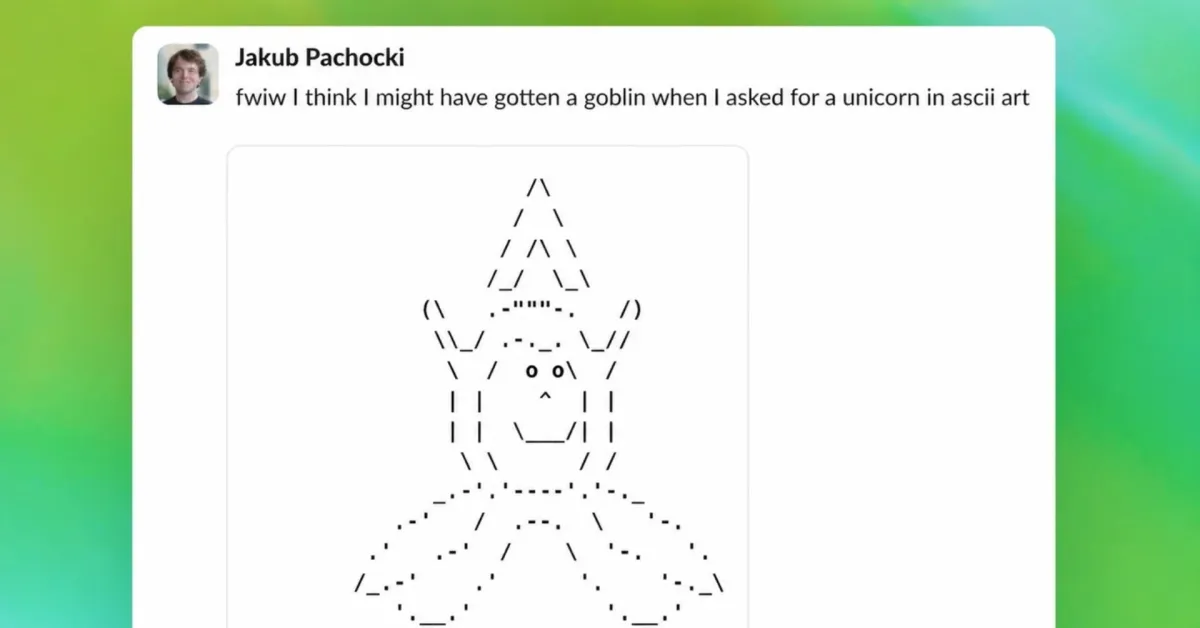

It seems that OpenAI managed to resolve an emerging issue with the GPT-5.5 models before their release: a goblin obsession.

GPT-5.5 was specifically instructed not to be obsessive about goblins, gremlins, and other legendary creatures

The company addressed the goblin issue by giving GPT-5.5 specific instructions to avoid legendary-creature metaphors before the habit became a real problem for customers.

OpenAI states, "With GPT-5.1, our models began to develop a strange habit: they started to mention goblins, gremlins, and other creatures more and more in their metaphors."

"A single 'little goblin' in a response can be harmless, even cute. However, this habit became noticeable enough between model generations: goblins continued to proliferate, and we needed to understand where they were coming from."

The goblin issue dates back to the "Nerdy personality" option that was briefly supported by ChatGPT.

To develop the personality, OpenAI had to "reward" the model for creative use of legendary-creature metaphors. However, even after the Nerdy personality option was retired, the model remained irrationally attached to gremlins, goblins, and other imaginary creatures.

OpenAI says, "Goblins were initially funny, but increasing employee reports became concerning."

The growing goblin issue with ChatGPT remained on OpenAI's radar between GPT-5.1 and GPT-5.4, as both users and employees ran into the model's obsession with these creatures.

You can still enable goblin mode in Codex

The solution is, in part, an explicit instruction not to mention goblins or similar creatures unless they are absolutely and explicitly related to the user query:

Never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and explicitly related to the user query.

Still, OpenAI shares a command that strips the goblin line from the model's instructions and lets goblins run free in Codex:

    instructions=$(mktemp /tmp/gpt-5.5-instructions.XXXXXX) && \
    jq -r '.models[] | select(.slug=="gpt-5.5") | .base_instructions' \
    ~/.codex/models_cache.json | \
    grep -vi 'goblins' > "$instructions" && \
    codex -m gpt-5.5 -c "model_instructions_file=\
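
The filtering step in the command above relies on `grep -vi`, which is easy to try on its own. The following is a minimal sketch, not OpenAI's procedure: the sample text is made up for illustration and only stands in for the real base instructions, and the variable names `sample` and `filtered` are hypothetical.

```shell
# Hypothetical sample text standing in for the model's base instructions.
sample=$(mktemp)
filtered=$(mktemp)
printf '%s\n' \
  'Be concise and accurate.' \
  'Never talk about goblins, gremlins, or trolls unless asked.' \
  'Prefer plain language.' > "$sample"

# -v inverts the match and -i ignores case, so every line that
# mentions "goblins" is dropped and the rest pass through unchanged.
grep -vi 'goblins' "$sample" > "$filtered"

cat "$filtered"
```

Note that `grep -v` removes whole lines, so the rest of a matching line (here, the gremlins and trolls) disappears along with the goblins.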
