The fourteenth International Conference on Learning Representations (ICLR) concludes today in Rio de Janeiro, wrapping up nearly a week of presentations, discussions, and research demonstrations from leading artificial intelligence scientists in academia and the technology industry. Here's what Apple showcased.

Apple Showcased Numerous Works at ICLR 2026

Although ICLR is not widely known to the general public, it has been one of the most respected and prestigious conferences in machine learning for over a decade.

This year, it ran from April 23 to 27 and occupied four pavilions and the conference center of the Riocentro Convention Center in Rio de Janeiro. ICLR 2026 brought together machine learning and artificial intelligence researchers from around the world, from China to India and from the United States to Europe.

Major technology companies, including Amazon, Tencent, Google, Microsoft, Ant Group, ByteDance, Huawei, Meta, Salesforce, Shopify, and Apple, attended as sponsors and exhibitors. Firms from Wall Street and the broader financial industry, such as Capital One, Jane Street, and Citadel, also took part.

As Apple announced a few days ago, the company set up a booth at the event, where it showcased SHARP, an open-source model that transforms 2D images into 3D scenes in just a few seconds. Apple also demonstrated LLM extraction in MLX, its open-source framework for machine learning on Apple Silicon.

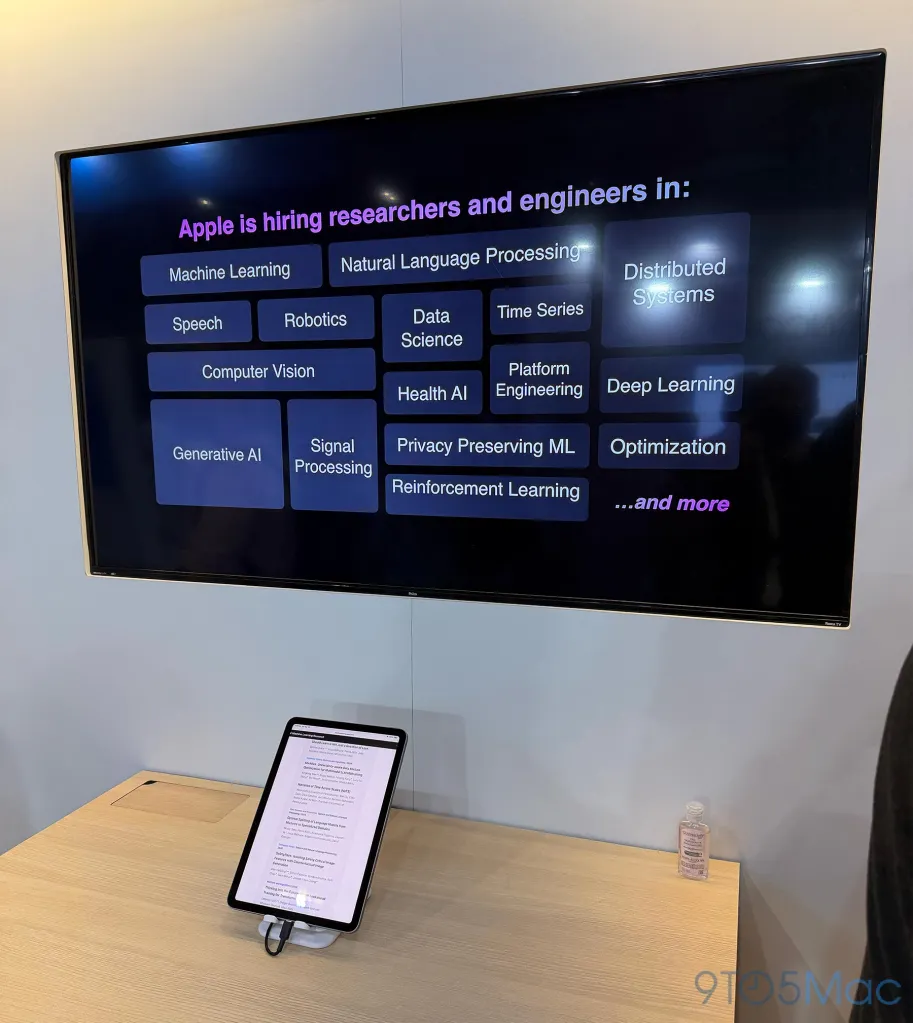

Apple's booth also doubled as a recruitment center: iPads let attendees scan QR codes and instantly apply for machine learning positions. This was not unique to Apple, as most companies in the exhibition area were also using the event to recruit AI talent.

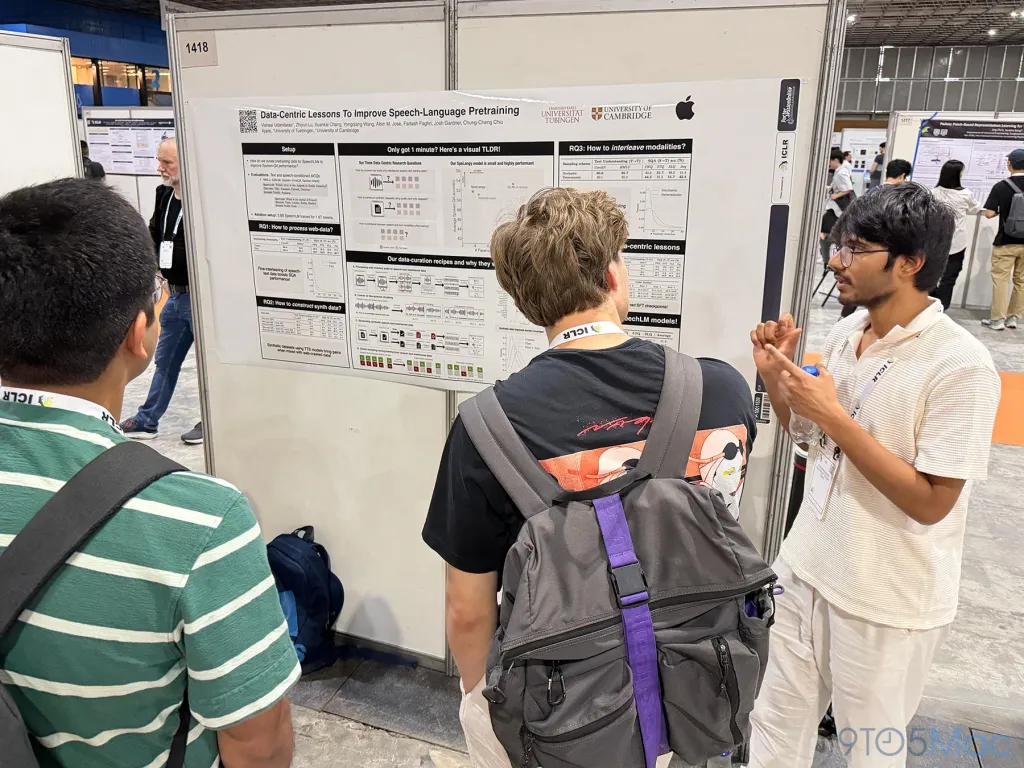

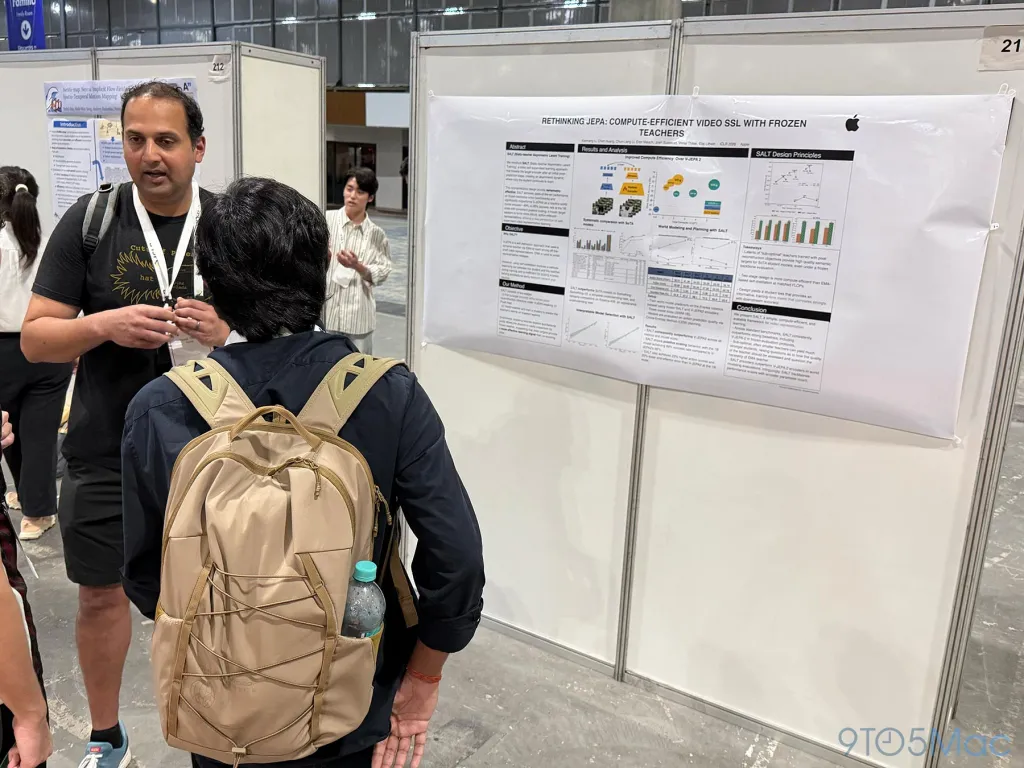

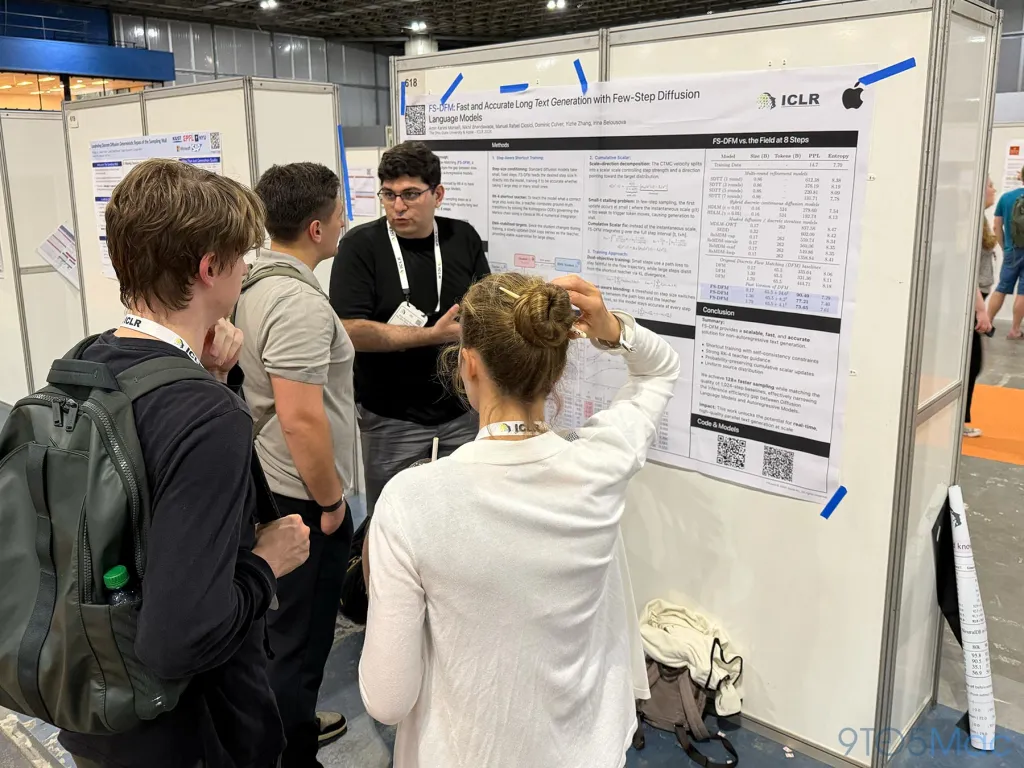

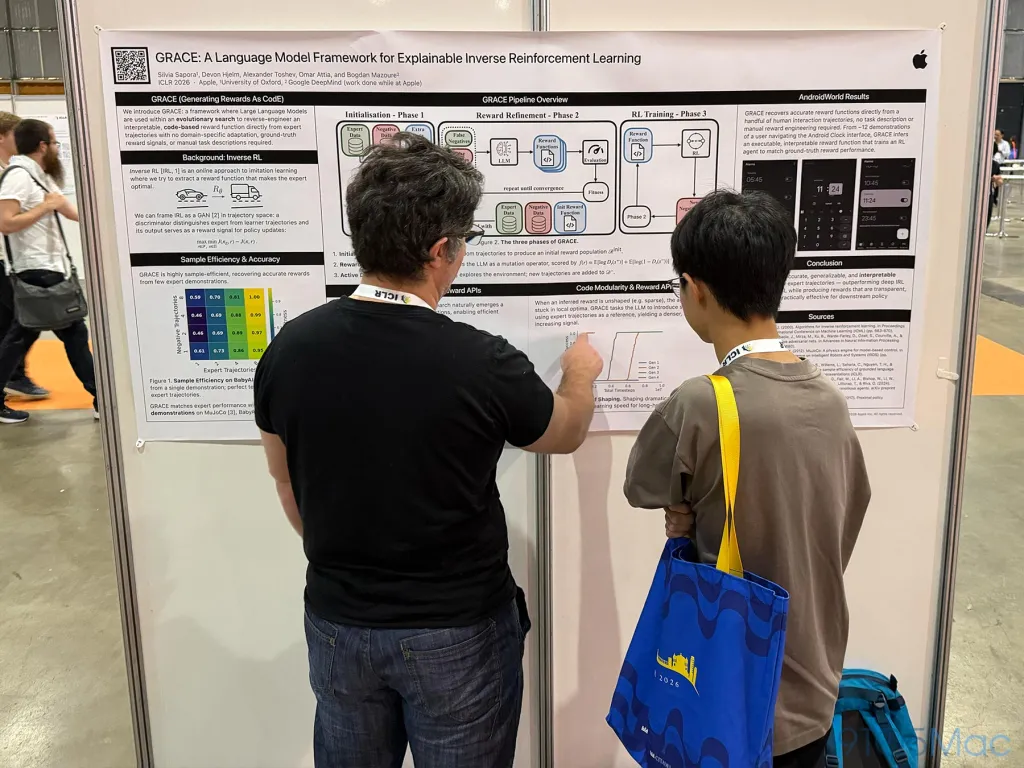

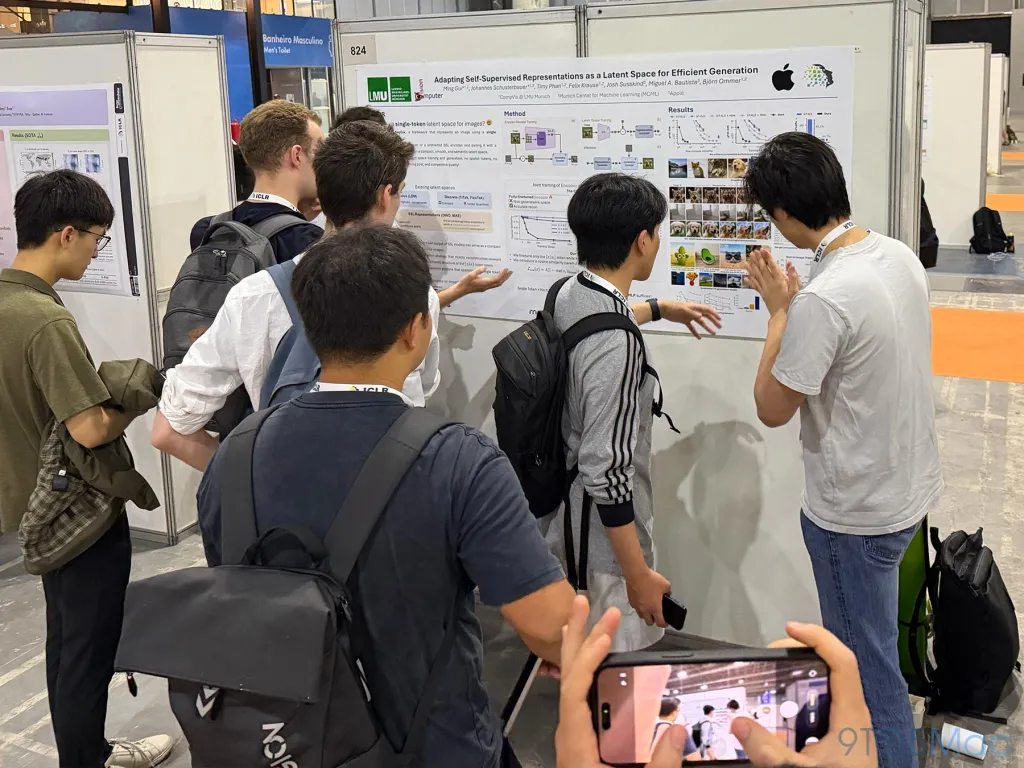

ICLR also featured large poster areas where researchers presented their work and answered questions about their studies. During the event, Apple showcased a number of papers, which you can find here.

Apple researchers also gave presentations and workshops on several of the papers accepted to the conference. These included "ParaRNN: Unlocking Parallel Training of Nonlinear RNNs for Large Language Models," presented by Federico Danieli, and "Learn Less to Fit More: Data Pruning Improves Information Recall," presented by Kunal Talwar.

To learn more about the works showcased by Apple at ICLR 2026, you can follow this link.
